nginx-ipng-stats-plugin's ipng_stats_logtail directive buffers many log lines into a single UDP datagram (default buffer=64k flush=1s). The listener was treating each datagram as exactly one log line, so any datagram with N>1 lines failed the v1 field-count check and dropped silently. In production this showed up as logtail_udp_packets_received_total roughly 4x logtail_udp_loglines_success_total, matching typical burst-coalesced 4-lines-per-batch ratios.

Fix: strip trailing CRLF, split the payload on '\n', parse each non-empty line independently.

Counter semantics now match the names:

- packets_received: datagrams off the socket (one per recvfrom)
- loglines_success: log lines parsed OK (may be many per datagram)
- loglines_consumed: log lines forwarded to the store (not dropped)

After the fix, loglines_success ≈ packets_received × avg_lines_per_batch.

Regression test TestUDPListenerBatchedDatagram sends one datagram with three '\n'-separated v1 lines and asserts all three LogRecords arrive, plus loglines_success >= 3 * packets_received.

Docs (user-guide.md, design.md) now explain the datagram-vs-line unit distinction so operators don't misread the ratio.

Co-Authored-By: Claude Opus 4.7 (1M context) <noreply@anthropic.com>

PREAMBLE

Although this computer program has a permissive license (Apache 2.0), if you came here looking to ask questions, you're better off just moving on :) This program is shared AS-IS, without any intent for anybody but IPng Networks to use it. Also, in case the structure of the repo and the style of this README weren't already clear, this program is 100% written and maintained by Claude Code.

You have been warned :)

What is this?

This project consists of four components:

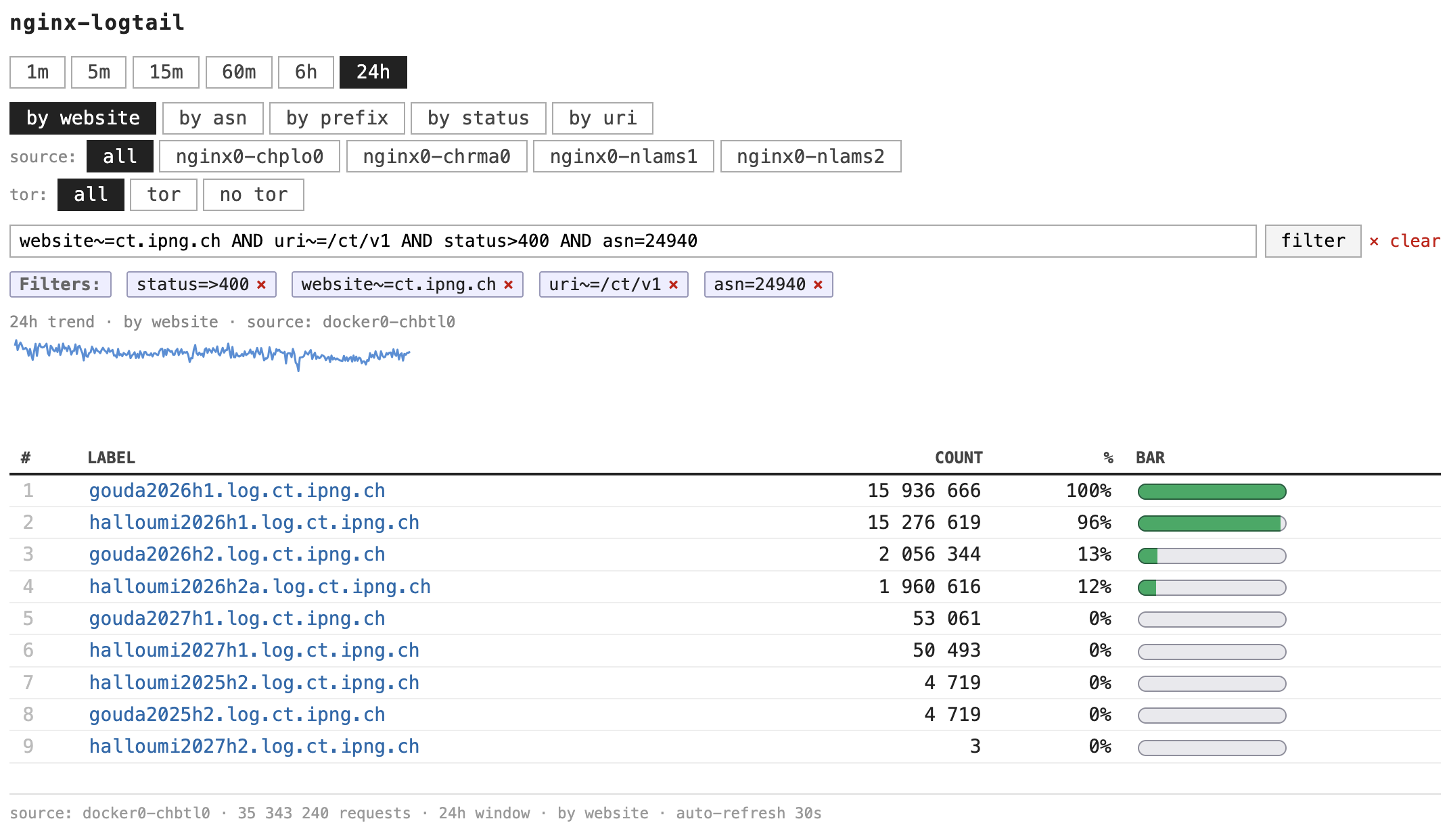

- A log collector that tails NGINX (or Apache) logs and/or receives logs over UDP from nginx-ipng-stats-plugin, aggregating counts per website, client address, URI, status, ASN, and source tag. It buckets these into windows of 1m, 5m, 15m, 60m, 6h, and 24h and exposes them over gRPC.

- An aggregator that subscribes to any number of collectors and serves a merged view on the same gRPC surface.

- An HTTP frontend that renders a drilldown dashboard (zero JavaScript, server-side SVG sparklines) against any collector or the aggregator.

- A CLI for shell queries, returning tables or JSON.
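
To make the collector and aggregator roles concrete, here is a small sketch of per-dimension counting and of merging two collectors' counts. It assumes a plain map keyed by the dimensions listed above. The type and function names (`key`, `counts`, `add`, `merge`) are hypothetical, and the project's real data structures, windowing, and gRPC types may look quite different.

```go
package main

import "fmt"

// key is a hypothetical aggregation key built from the dimensions
// named in the component list.
type key struct {
	Website string
	Client  string
	URI     string
	Status  int
	ASN     uint32
	Source  string
}

// counts maps each distinct key to the number of requests seen for it.
type counts map[key]uint64

// add records n requests for key k (what a collector would do per log line).
func (c counts) add(k key, n uint64) { c[k] += n }

// merge folds src into dst, summing the counts of identical keys
// (what an aggregator would do across collectors).
func merge(dst, src counts) {
	for k, n := range src {
		dst[k] += n
	}
}

func main() {
	k := key{Website: "example.org", Client: "192.0.2.1", URI: "/", Status: 200, ASN: 64500, Source: "udp"}

	collectorA := counts{}
	collectorA.add(k, 2)

	collectorB := counts{}
	collectorB.add(k, 3)

	merged := counts{}
	merge(merged, collectorA)
	merge(merged, collectorB)
	fmt.Println(merged[k]) // prints "5"
}
```

Using a comparable struct as the map key keeps each combination of dimensions distinct without string concatenation.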

Written in Go, released under [APACHE]. Runs as systemd units, in Docker, or any combination.

Quick Start

Three deployment flavors. Pick whichever suits the host.

Debian package. Build once, install the .deb on every nginx host (for the collector) and on one central host (for the aggregator + frontend):

    make install-deps   # one-time: apt deps, Go toolchain, go tools
    make pkg-deb        # produces nginx-logtail_<ver>_{amd64,arm64}.deb

    # on each nginx host:
    sudo dpkg -i nginx-logtail_*_amd64.deb
    sudo $EDITOR /etc/default/nginx-logtail   # defaults to UDP-only on :9514; set COLLECTOR_LOGS=... to also tail files
    sudo systemctl enable --now nginx-logtail-collector.service

    # on the central host:
    sudo dpkg -i nginx-logtail_*_amd64.deb
    sudo systemctl enable --now nginx-logtail-aggregator.service nginx-logtail-frontend.service
    # dashboard now at http://<central>:8080

Binaries land at /usr/sbin/nginx-logtail-{collector,aggregator,frontend} and the CLI at /usr/bin/nginx-logtail. All three services run as the _logtail system user (collector uses Group=www-data for log access). None are auto-enabled, so installing the package is safe on any host.

Docker Compose. Runs the aggregator and frontend in one stack; point the aggregator at the collectors (one on each nginx host):

    AGGREGATOR_COLLECTORS=nginx1:9090,nginx2:9090 docker compose up -d
    # frontend on :8080, aggregator gRPC on :9091
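
As an illustration of the shape of `AGGREGATOR_COLLECTORS`, the following sketch parses a comma-separated list of `host:port` addresses and rejects entries without a port. How the aggregator actually reads this variable is not shown in this README; the function name `parseCollectors` and its validation rules are assumptions made for the example.

```go
package main

import (
	"fmt"
	"net"
	"strings"
)

// parseCollectors splits a comma-separated list of host:port addresses,
// skips empty entries, and validates each one with net.SplitHostPort.
// (Hypothetical helper; not taken from the project's source.)
func parseCollectors(s string) ([]string, error) {
	var out []string
	for _, addr := range strings.Split(s, ",") {
		addr = strings.TrimSpace(addr)
		if addr == "" {
			continue
		}
		if _, _, err := net.SplitHostPort(addr); err != nil {
			return nil, fmt.Errorf("bad collector address %q: %w", addr, err)
		}
		out = append(out, addr)
	}
	return out, nil
}

func main() {
	addrs, err := parseCollectors("nginx1:9090,nginx2:9090")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(addrs) // prints "[nginx1:9090 nginx2:9090]"
}
```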

From source (make).

    make build   # build/<arch>/{collector,aggregator,frontend,cli}
    make test
    ./build/*/nginx-logtail -version

make help lists every target.

See [User Guide] for operator-facing documentation, or [Design] for the normative requirements and architectural rationale.